This post is a quick behind-the-scenes look at my Hostile Habitat series, made with MidJourney, and at how I started the process by figuring out which words helped to break, twist and deform matter.

When attempting to generate images that could convey the idea of collapse and climate change disasters, I wanted to explore different paths than the usual zombie apocalypses and pandemics, which often portray empty cities and an architecture of segregation, walls and military checkpoints.

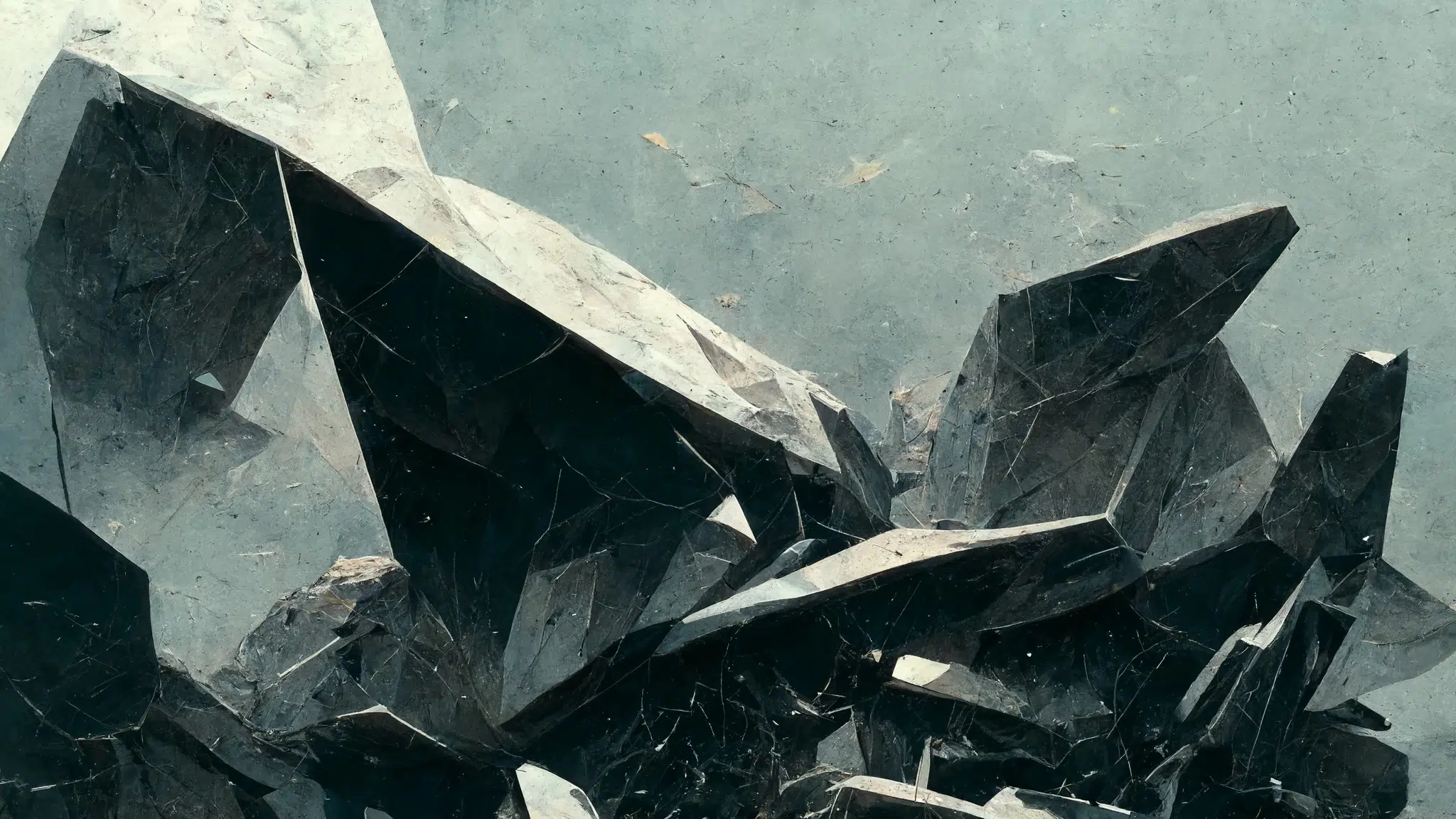

For Hostile Habitat, my aim was different. I wanted to express a form of architectural narrative based on debris, the camp and the temporary, exploring the material grammar of makeshift slum habitats.

My first key insight about MidJourney was that its UX is built around a generative art process. Features such as reroll, variations, remixes and ratings were more valuable than trying to find the perfect prompt. I have always been far more successful at sending the AI in the right direction with vague instructions and letting it come up with creative proposals through the built-in learning process of its rudimentary yet powerful interface.

Words such as smashed, broken parts, alien, stretched and twisted worked very well. The AI also reacts particularly well to moods, feelings and references to ambiences. But overall, the less precise you are, the more MidJourney can surprise you and engage in a creative exchange.

To obtain more triangular and sharper shapes, one reference has been handy. I often asked the AI to generate forms looking like “the broken part of a F117” (yes, the super-angular 90s stealth fighter), which allowed me to inject this weird reference into the generation.
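
As a rough illustration of this vocabulary-first approach, here is a minimal Python sketch that combines the deformation words quoted in this post with a loose subject and mood into prompt strings you could paste into MidJourney. The function name `build_prompts`, the subject and the sample mood are placeholders I made up for the example. The script only assembles text and does not call any MidJourney API.

```python
from itertools import product

# Deformation words quoted in this post.
DEFORMERS = ["smashed", "broken parts", "alien", "stretched", "twisted"]

# Shape reference quoted in this post, kept exactly as typed in the prompt.
SHAPE_REFERENCE = "the broken part of a F117"


def build_prompts(subject, moods, deformers=DEFORMERS, reference=None):
    """Return one loose prompt string per (deformer, mood) pair."""
    prompts = []
    for deformer, mood in product(deformers, moods):
        parts = [subject, deformer, mood]
        if reference:
            # Optional shape reference appended at the end of the prompt.
            parts.append(f"looking like {reference}")
        prompts.append(", ".join(parts))
    return prompts


if __name__ == "__main__":
    # Subject and mood are hypothetical examples, kept deliberately vague.
    for prompt in build_prompts(
        "makeshift habitat",
        ["melancholic ambience"],
        reference=SHAPE_REFERENCE,
    ):
        print(prompt)
```

The point is not to find the perfect string. Each line is just a starting direction to reroll, vary and remix from.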